Introduction

Welcome to part 2 of my LLM deep dive. If you've not read part 1, I highly encourage you to check it out first.

Previously, we covered the first two major stages of training an LLM:

- Pre-training - Learning from massive datasets to form a base model.

- Supervised fine-tuning (SFT) - Refining the model with curated examples to make it useful.

Now, we're diving into the next major stage: Reinforcement Learning (RL). While pre-training and SFT are well-established, RL is still evolving but has become a critical part of the training pipeline.

I've drawn on Andrej Karpathy's widely popular 3.5-hour YouTube video. Andrej is a founding member of OpenAI, so his insights are gold - you get the idea.

Let's go 🚀

What's the purpose of reinforcement learning (RL)?

Humans and LLMs process information differently. What's intuitive for us - like basic arithmetic - may not be for an LLM, which only sees text as sequences of tokens. Conversely, an LLM can generate expert-level responses on complex topics simply because it has seen enough examples during training.

This difference in cognition makes it challenging for human annotators to provide the "perfect" set of labels that consistently guide an LLM toward the right answer.

RL bridges this gap by allowing the model to learn from its own experience.

Instead of relying solely on explicit labels, the model explores different token sequences and receives feedback - reward signals - on which outputs are most useful. Over time, it learns to align better with human intent.

Intuition behind RL

LLMs are stochastic - meaning their responses aren't fixed. Even with the same prompt, the output varies because it's sampled from a probability distribution.
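To make the sampling idea concrete, here is a minimal sketch. The four-token vocabulary and the scores (logits) are made up for illustration; a real LLM does the same thing over tens of thousands of tokens.

```python
import math
import random

def softmax(logits):
    """Turn raw scores into a probability distribution."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(vocab, logits, rng):
    """Sample one token according to its softmax probability."""
    probs = softmax(logits)
    return rng.choices(vocab, weights=probs, k=1)[0]

# Hypothetical next-token candidates and scores for a single prompt.
vocab = ["4", "four", "five", "22"]
logits = [3.0, 2.0, 0.5, 0.1]

rng = random.Random(0)
samples = [sample_token(vocab, logits, rng) for _ in range(10)]
print(samples)  # same prompt, ten draws, not always the same token
```

The likeliest token wins most of the time, but not every time. That variation is what RL exploits.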

We can harness this randomness by generating thousands or even millions of possible responses in parallel. Think of it as the model exploring different paths - some good, some bad. Our goal is to encourage it to take the better paths more often.

To do this, we train the model on the sequences of tokens that lead to better outcomes. Unlike supervised fine-tuning, where human experts provide labeled data, reinforcement learning allows the model to learn from itself.

The model discovers which responses work best, and after each training step, we update its parameters. Over time, this makes the model more likely to produce high-quality answers when given similar prompts in the future.
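Here is a toy sketch of that sample-score-update loop. It is not how a production LLM is trained: the "model" is just three numbers (one logit per candidate answer), the reward is a hypothetical 1-or-0 check, and the update is a plain policy-gradient step that I've picked for illustration.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

answers = ["3", "4", "5"]   # hypothetical candidate responses to "2 + 2 = ?"
correct = "4"
logits = [0.0, 0.0, 0.0]    # the toy model's parameters (one per answer)
learning_rate = 0.5         # assumed value
rng = random.Random(0)

for step in range(200):
    probs = softmax(logits)
    # Explore: sample a response from the current policy.
    i = rng.choices(range(len(answers)), weights=probs, k=1)[0]
    # Feedback: 1 for a good outcome, 0 otherwise.
    reward = 1.0 if answers[i] == correct else 0.0
    # Update: push up the log-probability of the sampled response,
    # scaled by the reward it received.
    for j in range(len(logits)):
        grad_log_prob = (1.0 if j == i else 0.0) - probs[j]
        logits[j] += learning_rate * reward * grad_log_prob

final_probs = softmax(logits)
print(dict(zip(answers, (round(p, 3) for p in final_probs))))
```

All three answers start equally likely. After training, the rewarded answer takes most of the probability mass, which is the "take the better paths more often" idea in miniature.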

But how do we determine which responses are best? And how much RL should we do? The details are tricky, and getting them right is not trivial.

RL is not "new" - it can surpass human expertise (AlphaGo, 2016)

A great example of RL's power is DeepMind's AlphaGo, the first AI to defeat a professional Go player and later surpass human-level play.

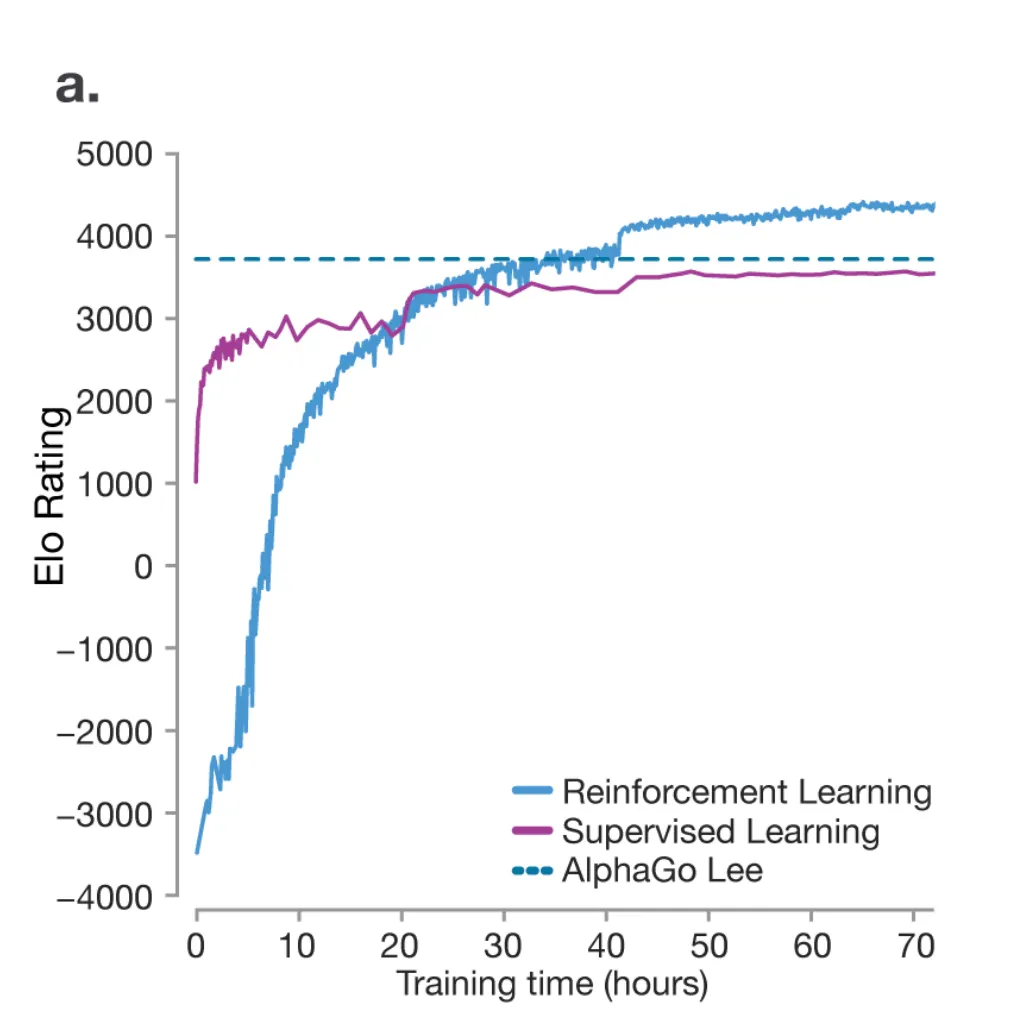

In the 2016 Nature paper (graph below), when a model was trained purely by SFT (giving the model tons of good examples to imitate), it was able to reach human-level performance, but never surpass it.

The dotted line represents the performance of Lee Sedol, one of the best Go players in the world.

This is because SFT is about replication, not innovation - it doesn't allow the model to discover new strategies beyond human knowledge.

However, RL enabled AlphaGo to play against itself, refine its strategies, and ultimately exceed human expertise (blue line).

RL represents an exciting frontier in AI - one where models can explore strategies beyond human imagination when we train them on a diverse and challenging pool of problems to refine their thinking strategies.

RL foundations recap

Let's quickly recap the key components of a typical RL setup:

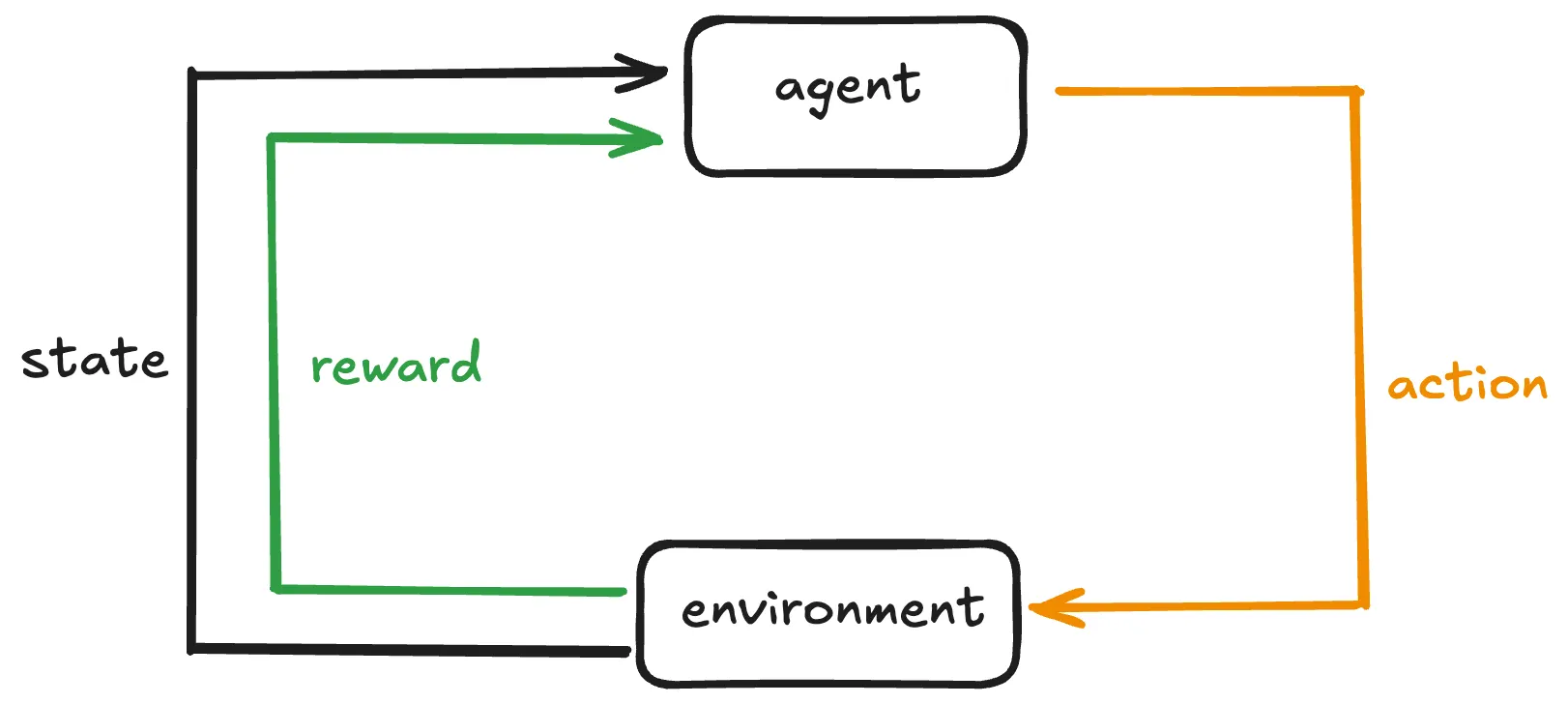

Agent - The learner or decision maker. It observes the current situation (state), chooses an action, and then updates its behaviour based on the outcome (reward).

Environment - the external system in which the agent operates.

State - a snapshot of the environment at a given step t.

At each time step, the agent performs an action that changes the environment's state to a new one. The agent also receives feedback indicating how good or bad the action was.

This feedback is called a reward, and is represented in a numerical form. A positive reward encourages that behaviour, and a negative reward discourages it.

By using feedback from different states and actions, the agent gradually learns the optimal strategy to maximise the total reward over time.
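The loop described above can be sketched in a few lines. `LineWorld` and `random_agent` are hypothetical names I made up for this toy: the agent walks along five positions and gets a bonus for reaching the last one. The agent here doesn't learn anything yet; the point is only to show the state → action → reward → new state cycle.

```python
import random

class LineWorld:
    """Toy environment: positions 0..4, with the goal at position 4."""

    def __init__(self):
        self.state = 0

    def step(self, action):
        # action is -1 (left) or +1 (right); the state is kept within [0, 4]
        self.state = min(4, max(0, self.state + action))
        done = self.state == 4
        # small penalty for every step, bonus for reaching the goal
        reward = 1.0 if done else -0.1
        return self.state, reward, done

def random_agent(state, rng):
    """Placeholder policy: ignores the state and picks a random action."""
    return rng.choice([-1, 1])

rng = random.Random(0)
env = LineWorld()
state, total_reward, done, steps = env.state, 0.0, False, 0

while not done and steps < 1000:
    action = random_agent(state, rng)        # agent acts
    state, reward, done = env.step(action)   # environment responds
    total_reward += reward                   # feedback accumulates
    steps += 1

print(steps, round(total_reward, 1))
```

A learning agent would replace `random_agent` with a policy that uses the rewards to prefer moving right.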

Policy

The policy is the agent's strategy. If the agent follows a good policy, it will consistently make good decisions, leading to higher rewards over many steps.

In mathematical terms, it is a function that determines the probability of different outputs for a given state - πθ(a|s).

Value function

The value function is an estimate of how good it is to be in a certain state, considering the long-term expected reward. For an LLM, the reward might come from human feedback or a reward model.
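One common way to make "long-term expected reward" concrete is the discounted return: add up future rewards, shrinking each one by a discount factor for every step of delay. The discount factor `gamma` and the sample episodes below are my own assumptions for illustration, not something specified in the talk.

```python
def discounted_return(rewards, gamma=0.99):
    """Sum of rewards, where a reward k steps in the future is scaled by gamma**k."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g

def estimate_value(episodes, gamma=0.99):
    """Monte Carlo value estimate: average return over episodes that start in the same state."""
    returns = [discounted_return(ep, gamma) for ep in episodes]
    return sum(returns) / len(returns)

# Three hypothetical episodes that all start from the same state.
episodes = [
    [0.0, 0.0, 1.0],  # reward arrives after three steps
    [0.0, 1.0],       # reward arrives after two steps
    [0.0, 0.0, 0.0],  # no reward at all
]
print(estimate_value(episodes, gamma=0.5))  # 0.25
```

With `gamma=0.5` the three returns are 0.25, 0.5 and 0.0, so the estimated value of the starting state is 0.25.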

Actor-Critic architecture

It is a popular RL setup that combines two components:

- Actor - Learns and updates the policy (πθ), deciding which action to take in each state.

- Critic - Evaluates the value function (V(s)) to give feedback to the actor on whether its chosen actions are leading to good outcomes.

How it works:

- The actor picks an action based on its current policy.

- The critic evaluates the outcome (reward + next state) and updates its value estimate.

- The critic's feedback helps the actor refine its policy so that future actions lead to higher rewards.
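Here is a deliberately tiny sketch of those three steps, assuming a single state with two actions and a deterministic reward. The learning rates and reward values are made up. The critic is a single number `value`, and the "feedback" it gives the actor is the gap between the reward received and the reward it expected.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

rewards = [0.0, 1.0]        # hypothetical: action 1 is the better action
actor_logits = [0.0, 0.0]   # actor: parameters of the policy
value = 0.0                 # critic: estimated value of the (single) state
actor_lr, critic_lr = 0.1, 0.1
rng = random.Random(0)

for step in range(500):
    probs = softmax(actor_logits)
    # 1) The actor picks an action from its current policy.
    action = rng.choices([0, 1], weights=probs, k=1)[0]
    reward = rewards[action]
    # 2) The critic compares the outcome with what it expected.
    #    Each episode ends after one step, so there is no next-state term.
    td_error = reward - value
    value += critic_lr * td_error
    # 3) The actor shifts probability toward actions that beat expectations.
    for j in range(2):
        grad_log_prob = (1.0 if j == action else 0.0) - probs[j]
        actor_logits[j] += actor_lr * td_error * grad_log_prob

probs = softmax(actor_logits)
print([round(p, 3) for p in probs], round(value, 3))
```

In a real LLM setup both actor and critic are large neural networks, which is where the memory and compute cost discussed later comes from.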

Putting it all together for LLMs

The state can be the current text (prompt or conversation), and the action can be the next token to generate. A reward signal (e.g. from human feedback or a reward model) tells the model how good or bad its generated text is.

The policy is the model's strategy for picking the next token, while the value function estimates how beneficial the current text context is in terms of eventually producing high-quality responses.

DeepSeek-R1 (published 22 Jan 2025)

To highlight RL's importance, let's explore DeepSeek-R1, a reasoning model achieving top-tier performance while remaining open-source. The paper introduced two models: DeepSeek-R1-Zero and DeepSeek-R1.

- DeepSeek-R1-Zero was trained solely via large-scale RL, skipping supervised fine-tuning (SFT).

- DeepSeek-R1 builds on it, addressing the challenges that R1-Zero encountered.

Let's dive into some of these key points.

RL algo: Group Relative Policy Optimisation (GRPO)

A key, game-changing RL algorithm is Group Relative Policy Optimisation (GRPO), a variant of the widely popular Proximal Policy Optimisation (PPO). GRPO was introduced in the DeepSeekMath paper in Feb 2024.

Why GRPO over PPO?

PPO struggles with reasoning tasks due to:

- Dependency on a critic model. PPO needs a separate critic model, effectively doubling memory and compute. Training the critic can be complex for nuanced or subjective tasks.

- High computational cost as RL pipelines demand substantial resources to evaluate and optimise responses.

- Absolute reward evaluations. When you rely on an absolute reward - meaning there's a single standard or metric to judge whether an answer is "good" or "bad" - it can be hard to capture the nuances of open-ended, diverse tasks across different reasoning domains.

How GRPO addressed these challenges:

GRPO eliminates the critic model by using relative evaluation - responses are compared within a group rather than judged by a fixed standard.

Imagine students solving a problem. Instead of a teacher grading them individually, they compare answers, learning from each other. Over time, performance converges toward higher quality.
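In code, "comparing within a group" boils down to normalising each response's reward against the rest of its group. The sketch below assumes the mean-and-standard-deviation normalisation described in the DeepSeekMath paper; the function name, the use of the population standard deviation, and the small `eps` that guards against division by zero are my own choices.

```python
import statistics

def group_relative_advantages(rewards, eps=1e-8):
    """Score each response relative to the other responses to the same query."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]

# Hypothetical rewards for four answers to the same question.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print([round(a, 2) for a in advantages])  # [1.0, -1.0, -1.0, 1.0]
```

Answers that beat the group average get a positive advantage, the rest get a negative one, and no critic model is needed to produce the baseline. If every answer in the group scores the same, all advantages are zero and that group contributes no learning signal.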

How does GRPO fit into the whole training process?

GRPO modifies how loss is calculated while keeping other training steps unchanged:

- Gather data (queries + responses)

  - For LLMs, queries are like questions.

  - The old policy (an older snapshot of the model) generates several candidate answers for each query.

- Assign rewards - each response in the group is scored (the "reward").

- Compute the GRPO loss. Traditionally, you'd compute a loss that shows the deviation between the model's prediction and the true label. In GRPO, however, you:

  - a) Measure how likely the new policy is to produce the past responses.

  - b) Check whether those responses are relatively better or worse than the rest of their group.

  - c) Apply clipping to prevent extreme updates.

  This yields a scalar loss.

- Back propagation + gradient descent

  - Back propagation calculates how each parameter contributed to the loss.

  - Gradient descent updates those parameters to reduce the loss.

  - Over many iterations, this gradually shifts the new policy to prefer higher-reward responses.

- Update the old policy occasionally to match the new policy. This refreshes the baseline for the next round of comparisons.
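The loss computation in the steps above can be sketched as follows. This is a simplified illustration, not DeepSeek's implementation: it works with one log-probability per whole response rather than per token, it leaves out the KL penalty term used in the paper, and the function name and the `clip_eps=0.2` default are assumptions.

```python
import math
import statistics

def grpo_loss(new_logprobs, old_logprobs, rewards, clip_eps=0.2):
    """Simplified GRPO loss for one group of responses to the same query."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    losses = []
    for new_lp, old_lp, r in zip(new_logprobs, old_logprobs, rewards):
        # b) Is this response relatively better or worse than its group?
        advantage = (r - mean) / (std + 1e-8)
        # a) How much more (or less) likely is it under the new policy?
        ratio = math.exp(new_lp - old_lp)
        # c) Clip the ratio so a single update cannot move the policy too far.
        clipped = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        surrogate = min(ratio * advantage, clipped * advantage)
        losses.append(-surrogate)  # minimising the loss maximises the surrogate
    return statistics.fmean(losses)

# Hypothetical group of four responses; only the first one was rewarded.
old = [-5.0, -5.0, -5.0, -5.0]
rewards = [1.0, 0.0, 0.0, 0.0]

unchanged = grpo_loss(old, old, rewards)
improved = grpo_loss([-4.9, -5.0, -5.0, -5.0], old, rewards)
print(round(unchanged, 4), round(improved, 4))
```

When the new policy matches the old one, the loss is essentially zero. Making the rewarded response slightly more likely pushes the loss below zero, which is the direction gradient descent follows. Pushing it much further gains nothing extra, because the ratio is clipped at `1 + clip_eps`.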

Chain of thought (CoT)

Traditional LLM training follows pre-training → SFT → RL. However, DeepSeek-R1-Zero skipped SFT, allowing the model to directly explore CoT reasoning.

Like humans thinking through a tough question, CoT enables models to break problems into intermediate steps, boosting complex reasoning capabilities. OpenAI's o1 model also leverages this, as noted in its September 2024 report: o1's performance improves with more RL (train-time compute) and more reasoning time (test-time compute).

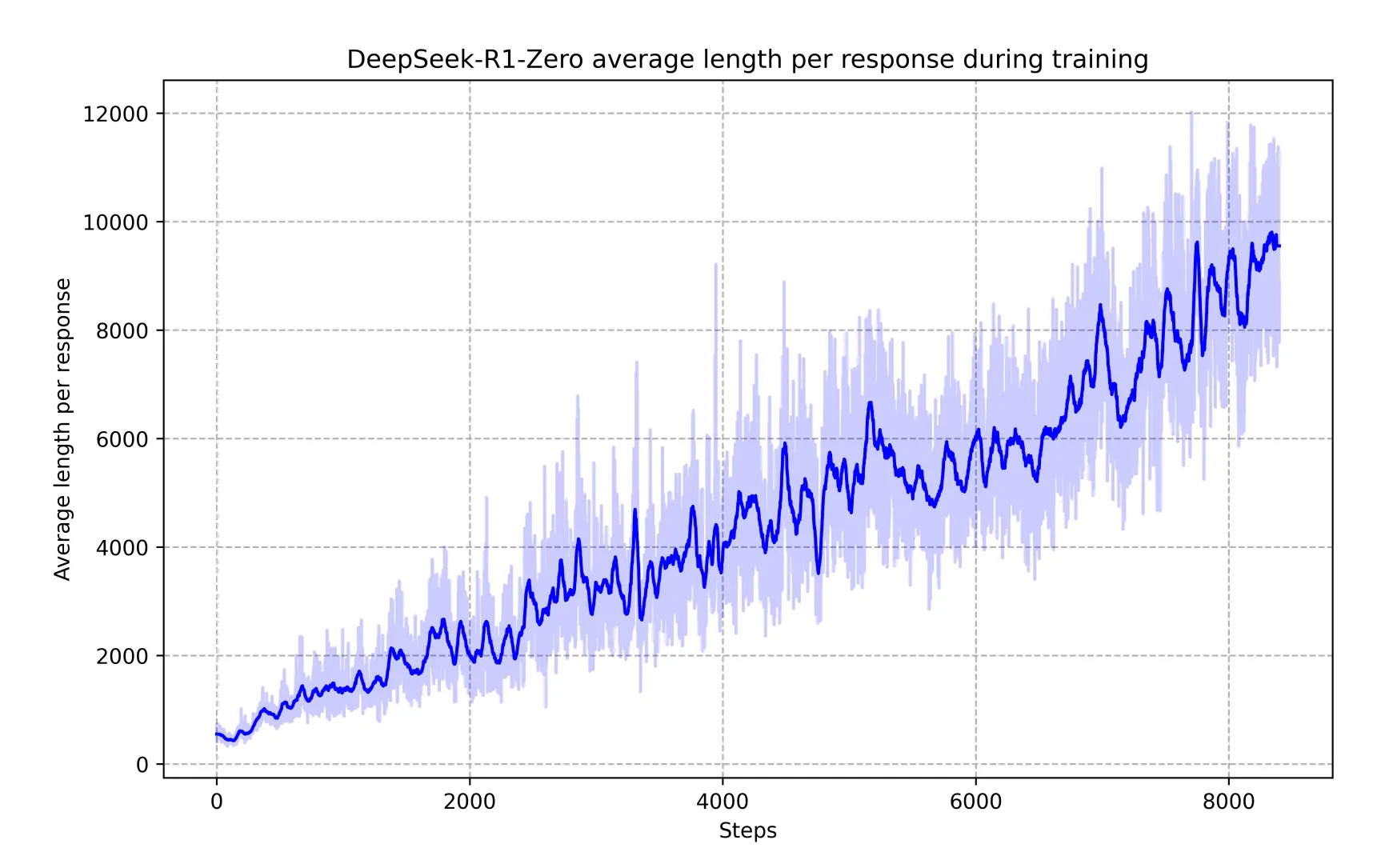

DeepSeek-R1-Zero exhibited reflective tendencies, autonomously refining its reasoning.

A key graph (below) in the paper showed increased thinking during training, leading to longer (more tokens), more detailed and better responses.

Without explicit programming, it began revisiting past reasoning steps, improving accuracy. This highlights chain-of-thought reasoning as an emergent property of RL training.

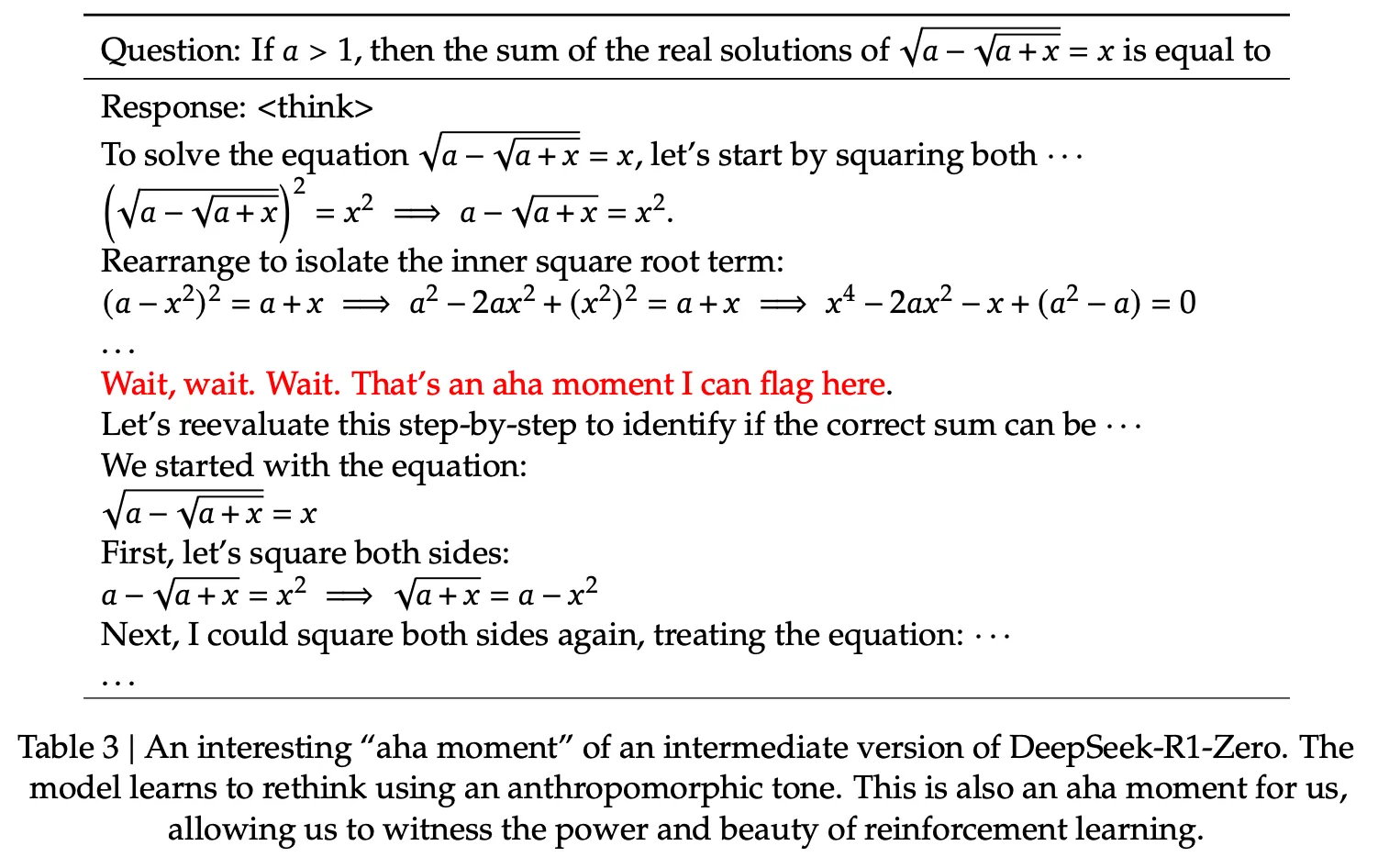

The model also had an "aha moment" (below) - a fascinating example of how RL can lead to unexpected and sophisticated outcomes.

Note: Unlike DeepSeek-R1, OpenAI does not show o1's full reasoning chains of thought. They are concerned about distillation risk - someone could imitate those reasoning traces and recover much of the reasoning performance. Instead, o1 shows only summaries of these chains of thought.

Reinforcement Learning with Human Feedback (RLHF)

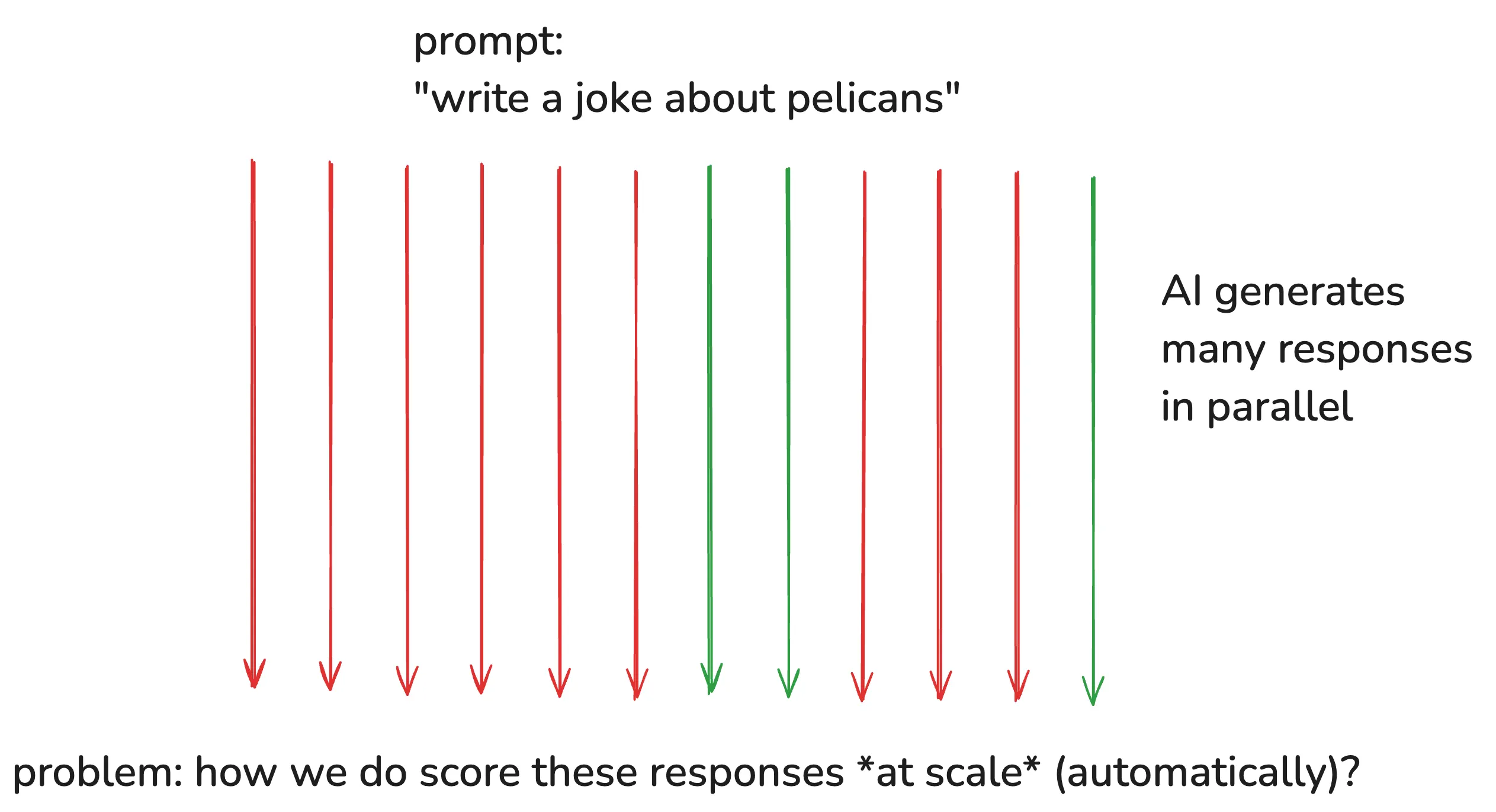

For tasks with verifiable outputs (e.g., math problems, factual Q&A), AI responses can be easily evaluated. But what about areas like summarisation or creative writing, where there's no single "correct" answer?
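For the verifiable case, the "reward" can be as simple as an automated check. Below is a deliberately crude sketch for math answers. The function name and the last-number-wins rule are assumptions I've made for illustration, and real pipelines use much more careful answer extraction.

```python
import re

def math_reward(response: str, expected: str) -> float:
    """Return 1.0 if the last number in the response equals the expected answer, else 0.0."""
    numbers = re.findall(r"-?\d+(?:\.\d+)?", response)
    if not numbers:
        return 0.0  # no numeric answer found
    return 1.0 if float(numbers[-1]) == float(expected) else 0.0

print(math_reward("2 + 2 = 4", "4"))         # 1.0
print(math_reward("The answer is 5.", "4"))  # 0.0
print(math_reward("No idea, sorry!", "4"))   # 0.0
```

No human is needed in the loop here, which is why this kind of RL scales so well. There is no equivalent one-liner for judging a poem or a summary.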

This is where human feedback comes in - but naive RL approaches are unscalable.

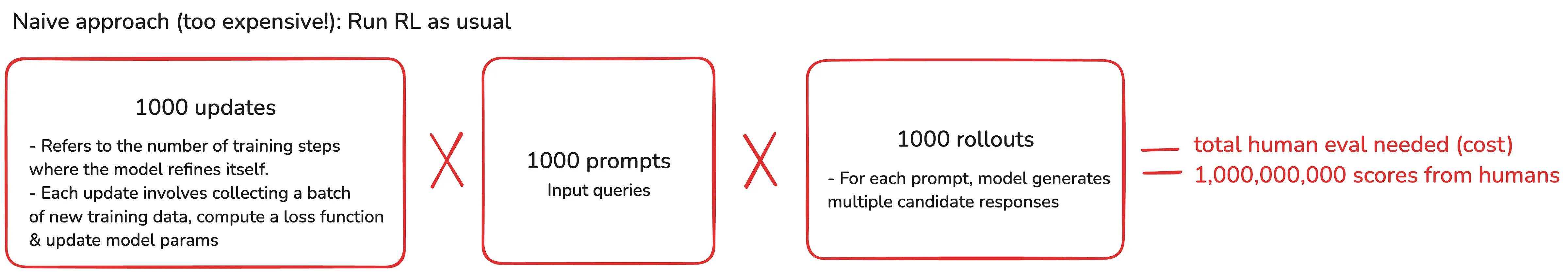

The problem with naive approaches

Let's look at the naive approach with some arbitrary numbers.

That's one billion human evaluations needed! This is too costly, slow and unscalable.
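To see how quickly the count blows up, here is the arithmetic with one set of illustrative figures. The three 1,000s are assumptions chosen so the product matches the one billion above, and the 10 seconds per judgement is a made-up number as well.

```python
# Illustrative figures only - chosen so the product is one billion.
updates = 1_000               # training updates
prompts_per_update = 1_000    # prompts used in each update
rollouts_per_prompt = 1_000   # sampled responses per prompt

total_evaluations = updates * prompts_per_update * rollouts_per_prompt
print(f"{total_evaluations:,}")  # 1,000,000,000

# Hypothetical: a human needs 10 seconds to judge one response.
seconds_per_evaluation = 10
person_years = total_evaluations * seconds_per_evaluation / (60 * 60 * 24 * 365)
print(round(person_years))  # 317
```

Even at an optimistic 10 seconds per response, that's roughly 317 years of non-stop human judging.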

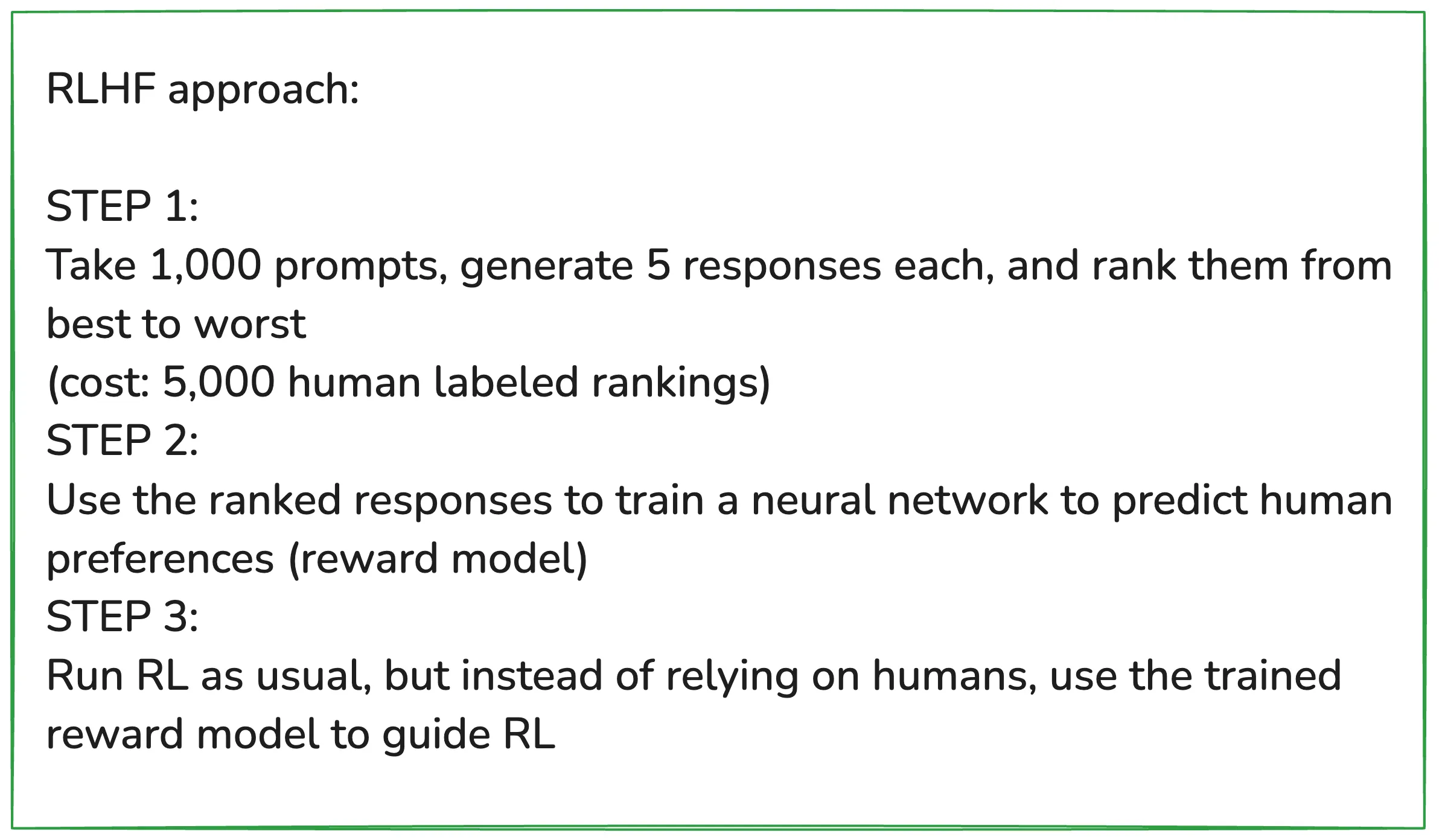

A smarter solution: train an AI reward model

Hence, a smarter solution is to train an AI "reward model" to learn human preferences, dramatically reducing human effort.

Ranking responses is also easier and more intuitive than absolute scoring.
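One common way to turn rankings into a training signal is a pairwise loss: for every pair in a ranking, the reward model should score the preferred response higher than the rejected one. The sketch below assumes the Bradley-Terry style loss widely used for RLHF reward models. The function names and example scores are hypothetical, and the talk doesn't specify which loss is used.

```python
import math
from itertools import combinations

def ranking_to_pairs(ranked_responses):
    """Expand a best-to-worst ranking into (preferred, rejected) pairs."""
    return list(combinations(ranked_responses, 2))

def pairwise_ranking_loss(score_preferred, score_rejected):
    """-log(sigmoid(score_preferred - score_rejected)), written as log(1 + e^(-diff))."""
    diff = score_preferred - score_rejected
    return math.log1p(math.exp(-diff))

# A human labeller ranks three responses from best to worst.
print(ranking_to_pairs(["A", "B", "C"]))
# [('A', 'B'), ('A', 'C'), ('B', 'C')]

# Hypothetical reward-model scores for one (preferred, rejected) pair.
print(round(pairwise_ranking_loss(2.0, 0.0), 3))  # 0.127 - agrees with the human
print(round(pairwise_ranking_loss(0.0, 2.0), 3))  # 2.127 - disagrees with the human
```

The loss is small when the reward model agrees with the human ordering and large when it doesn't, so minimising it nudges the model's scores toward human preferences. A single ranking of n responses also yields n(n-1)/2 training pairs, which is part of why ranking is such an efficient use of labeller time.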

Upsides

- Can be applied to any domain, including creative writing, poetry, summarisation, and other open-ended tasks.

- Ranking outputs is much easier for human labellers than generating creative outputs themselves.

Downsides

- The reward model is an approximation - it may not perfectly reflect human preferences.

- RL is good at gaming the reward model - if run for too long, the model might exploit loopholes, generating nonsensical outputs that still get high scores.

Do note that RLHF is not the same as traditional RL.

For empirical, verifiable domains (e.g., math, coding), RL can run indefinitely and discover novel strategies. RLHF, on the other hand, is more like a fine-tuning step to align models with human preferences.

To sum up

And that's a wrap! I hope you enjoyed Part 2 :) If you haven't already read part 1 - do check it out here.

Got questions or ideas for what I should cover next? Drop them in the comments - I'd love to hear your thoughts. See you in the next article!